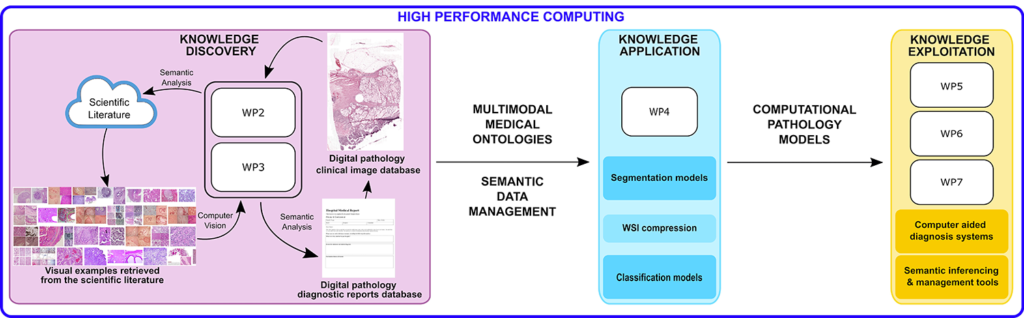

Exascale volumes of diverse data are continuously produced by distributed sources. Healthcare data stand out for their volume (more than 2000 exabytes produced in 2020), heterogeneity (many media and acquisition methods), embedded knowledge (e.g. diagnostic reports) and commercial value. Because deep learning models are supervised, they require large amounts of labelled, annotated data, which prevents models from extracting knowledge and value. ExaMode solves this by enabling easy and fast, weakly supervised knowledge discovery in heterogeneous exascale data provided by the partners, limiting human interaction. Its objectives include the development and release of extreme analytics methods and tools that are adopted in decision making by industry and hospitals. Deep learning lends itself naturally to building semantic representations of entities and relations in multimodal data. Knowledge discovery is performed via semantic document-level networks in text and the extraction of homogeneous features from heterogeneous images. The results are fused, aligned to medical ontologies, visualized and refined. Knowledge is then applied through a semantic middleware to compress, segment and classify images, and it is exploited in decision support and semantic knowledge management prototypes.

ExaMode is relevant to ICT12 in several respects:

- Challenge: it extracts knowledge and value from heterogeneous, rapidly increasing data volumes.

- Scope: the consortium develops and releases new methods and concepts for extreme-scale analytics that accelerate deep analysis (including via data compression), enable precise predictions, support decision making and visualize multimodal knowledge.

- Impact: the multimodal, multimedia semantic middleware makes heterogeneous data management and analysis easier and faster; it improves architectures for complex distributed systems with better tools that increase the speed of data throughput and access, as evaluated in extreme-analysis tests by industry and in hospitals.